Author: Tom Fadial, MD (@thame, Assistant Professor of Emergency Medicine, Creator of ddx.com, ecg stampede, harbor edu, i-PBL, EMHouston, Triage Touch, u-sim) // Reviewed by: Manny Singh, MD (@MPrizzleER); Alex Koyfman, MD (@EMHighAK); Brit Long (@long_brit)

I recall working as a medical student with a preceptor who sent me in to see a patient. After 45 minutes of data gathering, I returned and presented the case. My history was incomplete, the physical examination was incorrectly performed, and I synthesized this faulty data into an incorrect assessment and plan.

My preceptor kindly evaluated the patient with me, and I was amazed at how differently our encounters went. He asked a few key questions, supplemented them with an expertly performed, focused physical examination, and arrived at the correct diagnosis – all within minutes.

When I asked why our experiences were so different, the response was unsatisfying. He suggested that it was just a matter of time, and that with enough patient encounters and experience I’d be able to do what he did.

I wasn’t ready to wait, and I didn’t like the prospect of having no agency in my own progress towards becoming an expert clinician. So I started trying to understand how the expert clinician thinks and how I could avoid making those errors.

Definition

Metacognition is an awareness of your own thinking and of how your thought processes are applied. Understanding how we think can help us improve medical decision making, since flawed decision making is a key contributor to medical errors.

Rates and Types of Errors

Adverse events related to patient care take a variety of forms. One of the more significant contributors is “diagnostic mishaps” (accounting for approximately 10%) [1,2].

In one study, cognitive errors resulted in death or permanent disability in 25% of cases – of which nearly three quarters were deemed “highly preventable” [3].

Error rates are highest among specialties in which patients are diagnostically undifferentiated, compared to “visual specialties” (like radiology, pathology, and dermatology), where rates are as low as 2% [4].

Our specialty features undifferentiated patients, and our decisions (correct or incorrect) affect the immediate and long-term care of patients. This creates significant risk for error, but also the opportunity to have a tangible, positive impact.

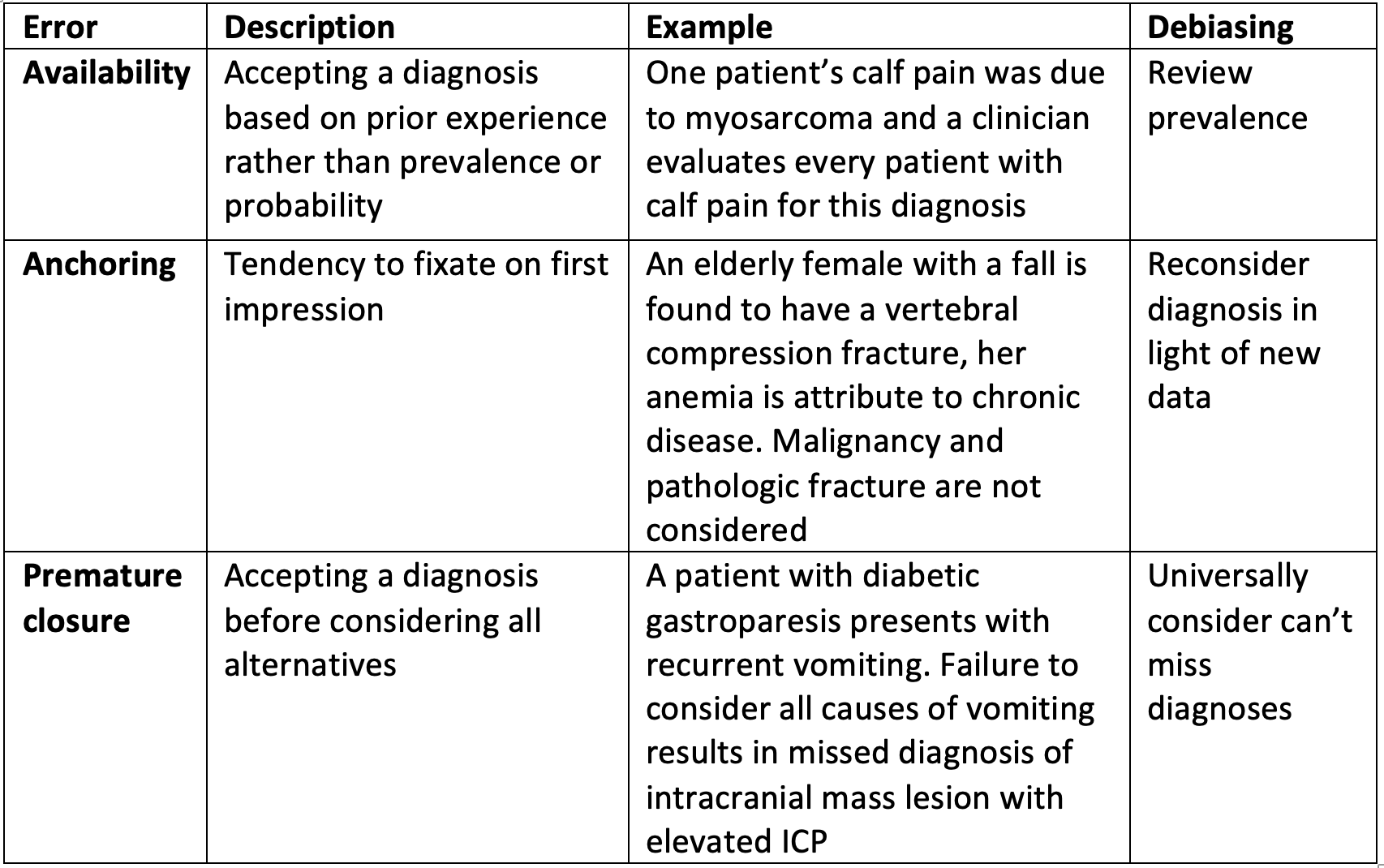

Examples of Errors

How We Think

There are two theorized cognitive processing models, each with pros and cons.

Intuitive (System 1)

Features

- Pattern recognition

- Quality dependent on practitioner experience

Caption: You can read this paragraph easily despite the jumbled letters. Your brain uses patterns of word length, start/end letters and context to quickly process the intended meaning.

Analytical (System 2)

Features

- Algorithm-based

- Explicit objective of calculating likelihood of a specific disease process
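One common way to make "calculating likelihood of a specific disease process" concrete is the odds form of Bayes' theorem, where post-test odds equal pre-test odds multiplied by a likelihood ratio. The sketch below is only an illustration of that arithmetic: the function name and the numbers are assumptions made for this example and do not come from the studies cited in this post.

```python
def post_test_probability(pre_test_probability: float, likelihood_ratio: float) -> float:
    """Update a disease probability with one test result (odds form of Bayes' theorem)."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre_test_probability must be strictly between 0 and 1")
    if likelihood_ratio <= 0.0:
        raise ValueError("likelihood_ratio must be positive")
    # Convert probability to odds, apply the likelihood ratio, convert back.
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1.0 + post_test_odds)


# Illustrative numbers only (assumed for this sketch, not taken from any study).
ILLUSTRATIVE_PRE_TEST = 0.20
ILLUSTRATIVE_POSITIVE_LR = 4.0

after_positive = post_test_probability(ILLUSTRATIVE_PRE_TEST, ILLUSTRATIVE_POSITIVE_LR)
print(f"Post-test probability: {after_positive:.2f}")
```

With these assumed inputs, a pre-test probability of 20% and a positive likelihood ratio of 4 give pre-test odds of 0.25, post-test odds of 1.0, and a post-test probability of 50%.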

Comparison [6]

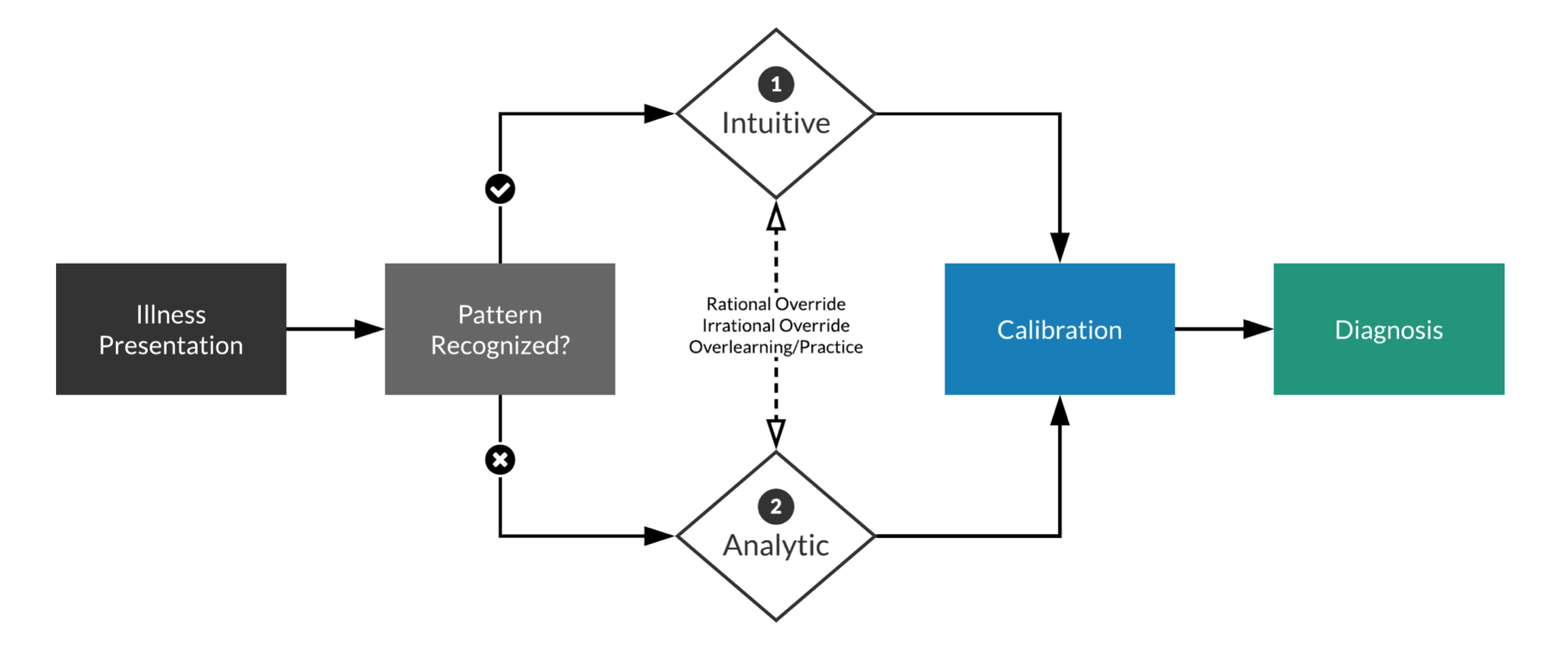

Dual Process Theory

In reality, these processing models operate together, a combination termed Dual Process Theory:

- The initial presentation of illness is either recognized or not by the observer

- If it is recognized, the parallel, fast, automatic processes of System 1 engage

- If it is not recognized, the slower, analytical processes of System 2 engage instead

- Repetitive processing in System 2 leads to recognition, after which processing defaults to System 1

- Either system may override the other: inattentiveness, distraction, or fatigue may diminish System 2 surveillance and allow System 1 more latitude
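The flow described in the list above can be sketched as a toy model. Everything here is hypothetical and chosen only for illustration: the class name, the `force_analysis` and `fatigued` flags, and the threshold of three analytic passes are assumptions, not part of the published model. The sketch also models only one direction of override (System 2 overriding System 1, and fatigue removing that check).

```python
from collections import Counter
from dataclasses import dataclass, field

# Assumed number of analytic passes before a pattern becomes automatic.
RECOGNITION_THRESHOLD = 3


@dataclass
class DualProcessReasoner:
    """Hypothetical toy model of the dual process flow."""
    recognized: set = field(default_factory=set)
    analytic_passes: Counter = field(default_factory=Counter)

    def evaluate(self, presentation: str, force_analysis: bool = False,
                 fatigued: bool = False) -> str:
        """Return which system handles this presentation."""
        if presentation in self.recognized:
            # Recognized pattern: System 1 engages. System 2 may override,
            # unless fatigue or distraction has weakened its surveillance.
            if force_analysis and not fatigued:
                return "System 2 (override)"
            return "System 1"
        # Unrecognized pattern: slower analytical processing engages.
        self.analytic_passes[presentation] += 1
        if self.analytic_passes[presentation] >= RECOGNITION_THRESHOLD:
            # Repetition in System 2 leads to recognition on later encounters.
            self.recognized.add(presentation)
        return "System 2"


reasoner = DualProcessReasoner()
trace = [reasoner.evaluate("hypothetical presentation") for _ in range(4)]
print(trace)
```

In this toy run the first three encounters are handled analytically, and the fourth is handled by pattern recognition because the assumed threshold has been reached.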

Our Specialty

In emergency medicine, these processes are still more intricately linked. System 1 and System 2 processes are initiated in parallel and may recur during the ED course of a single patient. We may use System 1 processing to identify a broad category describing a particular presentation (e.g., respiratory failure), a necessary step to initiating emergent stabilization measures. The time this buys then allows for a more detailed assessment, utilizing System 2 processes to refine the diagnosis (e.g., flash pulmonary edema) and institute targeted therapy. An unexpected decompensation may trigger the entire process to start over.

Further, these two processes may be engaged even within the scope of a single task such as ECG interpretation. When evaluating the ECG of a patient with syncope, for example, rapid System 1 processing excludes STEMI while System 2 evaluates for specific processes.

Other complications specific to emergency medicine include higher decision density, interruptions and distractions, and surge phenomena.

Opportunities for Improvement

While System 1 processing may be more prone to error, it remains a critical skill of the emergency physician. Further, it is a necessary and prized capability of the human brain.

Our goal is instead to prime System 1 processing with intentional System 2 algorithm generation. Creating System 2 constructs and applying them purposefully and repeatedly in real-world scenarios allows for active and auditable calibration of System 1 processes, and may provide a pathway for deliberate progress towards clinical expertise.
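One way to picture "auditable calibration" is a simple case log that records a first impression (System 1) before the written algorithm (System 2) is applied, then flags the cases where the two disagree. This is only a sketch under assumptions: the `CaseRecord` fields, the `audit` function, and the placeholder entries are all hypothetical, and treating the algorithm result as the comparison point is a simplification for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseRecord:
    """Hypothetical log entry for one encounter."""
    case_id: str
    gestalt_impression: str  # System 1: recorded before using the algorithm
    algorithm_result: str    # System 2: result of working through the algorithm


def audit(records: list[CaseRecord]) -> tuple[float, list[CaseRecord]]:
    """Return the agreement rate and the discordant cases that deserve review."""
    if not records:
        raise ValueError("at least one case is required")
    discordant = [r for r in records if r.gestalt_impression != r.algorithm_result]
    agreement_rate = 1.0 - len(discordant) / len(records)
    return agreement_rate, discordant


# Made-up placeholder entries, not real patient data.
log = [
    CaseRecord("case-1", "category A", "category A"),
    CaseRecord("case-2", "category A", "category B"),
    CaseRecord("case-3", "category C", "category C"),
    CaseRecord("case-4", "category B", "category B"),
]
rate, to_review = audit(log)
print(f"Agreement: {rate:.0%}; review: {[r.case_id for r in to_review]}")
```

The discordant cases are the useful output: each one is a prompt to ask whether the first impression or the algorithm needs revising.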

ddxof.com

After the session with my preceptor, I went home and created my first algorithm. Since then, I’ve created over 133 algorithms for the evaluation and management of emergency medicine topics – each prompted by a real patient or case where I found my System 1 processing to be incomplete, possibly biased, or insufficiently calibrated. ddxof.com content is available for free online or on your mobile device.

References:

1. Leape LL, Brennan TA, Laird N, Lawthers AG, Localio AR, Barnes BA, et al. The nature of adverse events in hospitalized patients. Results of the Harvard Medical Practice Study II. N Engl J Med. 1991 Feb 7;324(6):377–84.

2. Thomas EJ, Studdert DM, Burstin HR, Orav EJ, Zeena T, Williams EJ, et al. Incidence and types of adverse events and negligent care in Utah and Colorado. Med Care. 2000 Mar;38(3):261–71.

3. Wilson RM, Harrison BT, Gibberd RW, Hamilton JD. An analysis of the causes of adverse events from the Quality in Australian Health Care Study. Med J Aust. 1999 May 3;170(9):411–5.

4. Croskerry P. From mindless to mindful practice – cognitive bias and clinical decision making. N Engl J Med. 2013 Jun 27;368(26):2445–8.

5. Mele AR. Real self-deception. Behav Brain Sci. 1997 Mar;20(1):91–102; discussion 103–36.

6. Croskerry P. A universal model of diagnostic reasoning. Acad Med. 2009 Aug;84(8):1022–8.
