Originally published on Ultrasound G.E.L. on 7/6/20 – Visit HERE to listen to accompanying PODCAST! Reposted with permission.

Follow Dr. Michael Prats, MD (@PratsEM) from Ultrasound G.E.L. team!

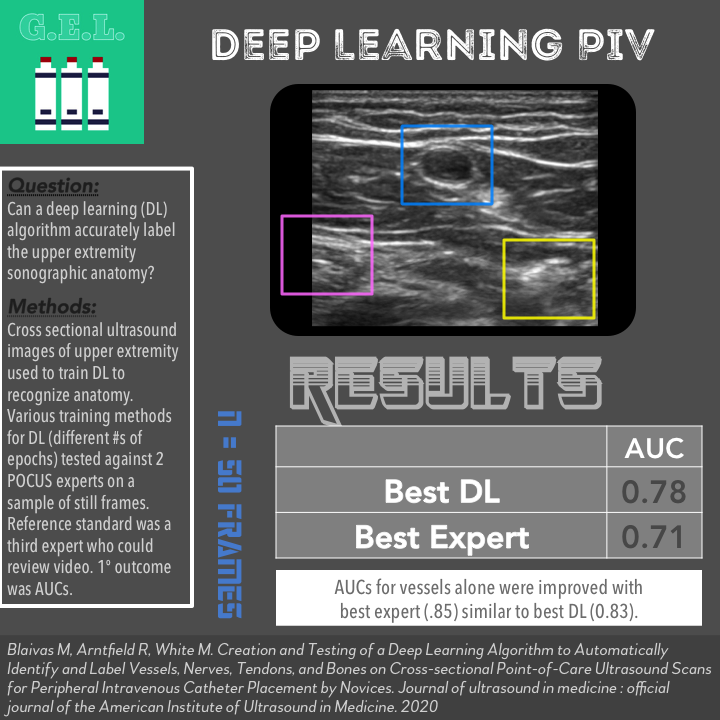

Creation and Testing of a Deep Learning Algorithm to Automatically Identify and Label Vessels, Nerves, Tendons, and Bones on Cross-sectional Point-of-Care Ultrasound Scans for Peripheral Intravenous Catheter Placement by Novices

J Ultrasound Med March 2020 – Pubmed Link

Take Home Points

1. A deep learning algorithm was able to identify sonographic anatomy from images of normal upper extremities with accuracy similar to a POCUS expert.

2. More research is needed to test deep learning on actual patients compared to clinical interpretations.

Background

Deep learning is the part of artificial intelligence in which a computer is taught to perform a task by feeding large amounts of data through a layered neural network. Basically, you teach the computer by example and then it can subsequently “learn” to function by itself. This is being used in many different areas of technology, one of which is medical imaging. While it may seem frightening to trust a computer to read a CT scan or MRI, there are the potential advantages of high precision and unparalleled speed. This is actually already being done in many cases and is becoming more prevalent in the world of ultrasound. This article cited a study showing that deep learning does quite well in calculating ejection fractions. Nonetheless, we are still in the early stages of making sure this is safe. This article takes the next step in moving this towards clinical practice: can deep learning accurately identify the anatomy necessary to guide an ultrasound-guided vascular access procedure?

Question

Can deep learning accurately label the upper extremity sonographic anatomy?

Population

They analyzed musculoskeletal, soft tissue, vascular access, and regional anesthesia ultrasound images and clips

A total of 183,522 images were imported into the training data set

These came from all over the internet

- Image banks

- Internet posted videos

- Vendor videos

Design

Ultrasound images and videos were obtained as above

They were imported into an open-source video labeling software. Apparently this was originally used to help autonomous cars label surroundings.

This software was used to label blood vessels, nerves, bones, and tendons with boxes in these images (every 10 frames). The person who labelled the images was not one of the experts tested for accuracy in this study.
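To make the every-10-frames labeling concrete, here is a minimal Python sketch. The `BoxLabel` record, its field names, and the pixel-coordinate convention are assumptions for illustration; the post does not describe the labeling software's actual data format.

```python
from dataclasses import dataclass

# The four structure classes named in the study.
CLASSES = ("vessel", "nerve", "bone", "tendon")


@dataclass
class BoxLabel:
    """One hand-drawn box on one frame (hypothetical format)."""
    frame: int
    structure: str
    x: int       # left edge in pixels
    y: int       # top edge in pixels
    width: int
    height: int

    def __post_init__(self):
        if self.structure not in CLASSES:
            raise ValueError(f"unknown structure: {self.structure}")


def frames_to_label(total_frames: int, stride: int = 10) -> list[int]:
    """Indices of the frames a labeler would annotate: every `stride`-th frame."""
    if stride <= 0:
        raise ValueError("stride must be positive")
    return list(range(0, total_frames, stride))


clip_frames = frames_to_label(45)
print(clip_frames)  # [0, 10, 20, 30, 40]

# Example box on the second labeled frame (coordinates are made up).
label = BoxLabel(frame=clip_frames[1], structure="vessel",
                 x=120, y=80, width=40, height=35)
```

Labeling only a subset of frames keeps the manual workload manageable, since neighboring frames in a clip tend to look nearly identical.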

The YOLOv3 (stands for You Only Look Once version 3) deep learning algorithm was used.

Using trial and error, they coded adjustments to YOLOv3 so that it could recognize ultrasound structures.

They modified it to make predictions when it was less certain of the object being identified.
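Letting a detector speak up when it is less certain usually comes down to a confidence threshold: each detection carries a score, and only detections at or above a cutoff are displayed. A minimal sketch of that idea, assuming hypothetical scores and cutoffs (the post does not report the values the authors used or exactly how they changed YOLOv3):

```python
from dataclasses import dataclass


@dataclass
class Detection:
    structure: str
    confidence: float  # model's score between 0 and 1


def visible_detections(detections, threshold):
    """Keep only detections whose confidence meets the cutoff."""
    return [d for d in detections if d.confidence >= threshold]


# Made-up detections for a single frame.
frame = [
    Detection("vessel", 0.82),
    Detection("nerve", 0.41),
    Detection("tendon", 0.27),
]

strict = visible_detections(frame, 0.5)    # higher cutoff, fewer labels
lenient = visible_detections(frame, 0.25)  # lower cutoff, more labels

print([d.structure for d in strict])   # ['vessel']
print([d.structure for d in lenient])  # ['vessel', 'nerve', 'tendon']
```

Lowering the cutoff shows more structures at the cost of more wrong labels, which is the tradeoff an AUC summarizes across cutoffs.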

They then obtained new ultrasound videos of the upper extremity that had not been used for training. Four different training methods were used, which varied in the number of epochs (one epoch is a single cycle of training through the full data set).
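For a feel of what changing the number of epochs means in practice, here is a rough sketch that counts weight updates. The batch size of 64 and the epoch counts are made-up assumptions, not values from the paper; only the 183,522-image training set size comes from the study.

```python
import math


def weight_updates(n_images: int, batch_size: int, epochs: int) -> int:
    """Total number of batches processed when training for `epochs` full passes."""
    batches_per_epoch = math.ceil(n_images / batch_size)
    return batches_per_epoch * epochs


# Hypothetical epoch counts, for illustration only.
for epochs in (10, 50):
    print(epochs, weight_updates(183_522, 64, epochs))
```

More epochs means more passes over the same images, which can improve the fit but also raises the risk of memorizing the training set.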

They randomly selected 50 frames for testing by the deep learning algorithm and compared this to two fellowship-trained POCUS experts. The reference standard was a POCUS expert with 25 years of experience (the most experienced author) who reviewed the labels assigned by YOLOv3 for accuracy. The reference author was also able to use the entire video clip to determine the true anatomy.

Primary outcome appeared to be area under the curve (AUC) for the 4 algorithms compared to the 2 POCUS experts. They also looked at identifying blood vessels alone.
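As a refresher on what an AUC measures, the sketch below computes it as the probability that a randomly chosen true structure gets a higher score than a randomly chosen false one, with ties counting half. The scores and labels are invented, and this is not necessarily how the authors calculated their AUCs.

```python
def auc(scores, labels):
    """AUC from pairwise comparisons of positive and negative cases.

    `labels` holds 1 for a true structure and 0 for a false one.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Made-up confidence scores and reference-standard labels.
example = auc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0])
print(example)  # 0.75
```

An AUC of 0.5 is a coin flip and 1.0 is perfect, which is why values around 0.7 for experts look low.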

Who did the ultrasounds?

Fellowship-trained POCUS experts were involved in this study. There was no new image acquisition for the purposes of this study; the images used were obtained from various online sources.

The Scan

Linear transducer

Learn how to do Ultrasound-guided Peripheral IV Access from 5 Minute Sono!

Check out Vascular Anatomy on the POCUS Atlas!

Results

N = 50 frames used to test the algorithm compared to the experts

Primary Outcome – AUC of Deep Learning Algorithms Compared to POCUS Experts

Expert 1 AUC 0.71 (CI 0.64-0.79)

Expert 2 AUC 0.69 (CI 0.61-0.77)

DL 1 AUC 0.78 (CI 0.71-0.85)

DL 2 AUC 0.70 (CI 0.61-0.79)

DL 3 AUC 0.78 (CI 0.71-0.85)

DL 4 AUC 0.76 (CI 0.69-0.83)

Secondary Outcome – AUCs for Vessels alone

Expert 1 AUC 0.70 (CI 0.58-0.82)

Expert 2 AUC 0.85 (CI 0.76-0.94)

DL 1 AUC 0.81 (CI 0.70-0.93)

DL 2 AUC 0.72 (CI 0.59-0.86)

DL 3 AUC 0.83 (CI 0.73-0.93)

DL 4 AUC 0.79 (CI 0.68-0.91)

Limitations

Using only static images to test the experts and algorithm is a limitation. Furthermore, these images were likely optimized since they were obtained by experts. How would this DL algorithm function with lower quality images? What about pathologic images, such as one containing a soft tissue abscess?

This seems like a low AUC for POCUS experts compared to another POCUS expert. This makes me question how well these images simulated actual practice where you can dynamically move through objects and look in different planes. It is possible that in actual practice, experts are much better than they appeared in this study.

Identifying anatomy is only one portion of the skill set, and potentially the easiest. Identifying the anatomy may help remove one barrier to performing ultrasound-guided peripheral vascular access, but there are other skills required. Additionally, differentiating artery vs vein can be the most challenging aspect and was not addressed by this algorithm.

The authors keep saying that the DL algorithm “outperformed” the POCUS experts, but the 95% confidence intervals overlap, so it is hard to say that one is statistically superior.
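The overlap is easy to check against the primary-outcome intervals reported in the Results. The sketch below only tests whether each pair of intervals overlaps; it is a quick sanity check, not a formal statistical comparison of AUCs.

```python
# Primary-outcome 95% confidence intervals as reported in the Results.
experts = {
    "Expert 1": (0.64, 0.79),
    "Expert 2": (0.61, 0.77),
}
algorithms = {
    "DL 1": (0.71, 0.85),
    "DL 2": (0.61, 0.79),
    "DL 3": (0.71, 0.85),
    "DL 4": (0.69, 0.83),
}


def overlaps(a, b):
    """True when two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


all_overlap = all(
    overlaps(dl_ci, expert_ci)
    for dl_ci in algorithms.values()
    for expert_ci in experts.values()
)
print(all_overlap)  # True
```

Every algorithm's interval overlaps both experts' intervals, so these numbers alone do not establish that any algorithm was better.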

Discussion

The authors bring up the good point that this performed exceptionally well considering they just used videos they found on the internet. If there were a larger ultrasound database, accuracy could potentially improve. The DL algorithm will only be as good as the images used to train it.

These authors are proposing deep learning as a bridge to help train new learners. The elephant in the room is whether deep learning will ultimately replace the user as the interpreter. Though this is the fear of some, it seems we are far from that at this point. It is more likely that these deep learning innovations will become tools that can help us within certain limitations. I liken it to automated ECG reading: sometimes it's right, sometimes it's wrong, and sometimes it can do calculations that would take you more time to do. We will just have to learn to integrate these tools into our practice with a healthy amount of distrust.


More Great FOAMed on this Topic

Point-of-Care Ultrasound Certification Academy Blog Post

AI Image by @DocShannon and seen on Critical Care Northampton


Cite this post as

Michael Prats. Deep Learning for Peripheral IV Anatomy. Ultrasound G.E.L. Podcast Blog. Published on July 06, 2020. Accessed on January 15, 2021. Available at https://www.ultrasoundgel.org/95.